Question development history for 2018 Census: Sexual Orientation

Overview

Summary

Key driver for question development: New Content

Data users have indicated that a question on sexual orientation needs to be able to identify specific population groups within the wider LGBTIQ population. This is in order to firstly identify the size of these population groups within New Zealand and secondly meet the health and service provisioning needs for these specific population groups for relevant organisations.

Quality priority level: 3

Outcome from question development <maybe we update this section in 2017>

Cognitive testing

Usability testing

Mass completions

Volume test

1 – Purpose

The purpose of this document is to capture the question development process for the Sexual

orientation variable, including findings from waves of testing conducted in 2015-2017.

The 2018 variable specification provided by the Customer Needs and Data (content) Team provides

the background and scope of this variable.

This document is intended for use within Statistics New Zealand.

2 – Background

This variable seeks to collect data on aspects of respondents’ self-reported sexual identity from the census usually resident population count aged 15 years and over (ie New Zealand adults). This is in order to meet the needs of data users for information on sexual minorities in New Zealand.

The information need for this variable is to identify the prevalence (number) of individuals who

identify as non-heterosexual within the New Zealand population. This includes lesbian, gay, bisexual,

and other non-heterosexual sexual identities.

Data users have indicated that a question on sexual orientation needs to be able to identify specific population groups within the wider LGBTIQ population (eg gay, bisexual), by itself or in combination with other census variables (most notably sex and any potential gender identity question). This is in order to firstly identify the size of these population groups within New Zealand and secondly meet the health and service provisioning needs for these specific population groups for relevant organisations (eg health service providers involved in STI and HIV prevention). There is a strong desire for baseline population counts of lesbian, gay and other sexual minority populations, so that decisions affecting these population groups have a stronger evidence base than is currently available.

Currently there is no official definition of sexual orientation for use within statistical surveys in New

Zealand. If data on sexual orientation/identity is to be collected in the 2018 Census, a definition for

sexual orientation/identity will need to be developed.

Previous work undertaken for the OSS has given some background to developing an official

definition of sexual orientation for statistical surveys in New Zealand:

Definitions of sexual orientation (from Pega, F., Gray, A., & Veale, J. (2010) p.56)

Given that the umbrella concept of sexual orientation is defined by three key measurement

concepts, we propose that sexual orientation should be treated as a statistical topic, with three

measurement concepts: sexual attraction, sexual behaviour, and sexual identity. The following

working definitions for the statistical topic of sexual orientation and the associated measurement

concepts are proposed:

Statistical topic: Sexual Orientation

Proposed working definition: Sexual orientation is defined by three key concepts: sexual attraction, sexual behaviour, and sexual identity. The relationship between these components is that sexual orientation is based upon sexual attraction and that sexual attraction can result in various sexual behaviours and the adoption of sexual identities. The three key concepts are related, but not necessarily congruent continuous variables, each of which can independently change over time and by social context.

Measurement concept: Sexual Attraction

Proposed working definition: “Attraction towards one sex or the desire to have sexual relationships or to be in a primary loving, sexual relationship with one or both sexes” (Savin-Williams, 2006, p. 41).

Measurement concept: Sexual Behaviour

Proposed working definition: “Any mutually voluntary activity with another person that involves genital contact and sexual excitement or arousal, that is feeling really turned on, even if intercourse or orgasm did not occur” (Laumann, Gagnon, Michael, & Michaels, 1994, p. 67).

Measurement concept: Sexual Identity

Proposed working definition: “Personally selected, socially and historically bound labels attached to the perceptions and meanings individuals have about their sexuality” (Savin-Williams, 2006, p. 41).

Early scoping work on the development of a Sexual Orientation statistical standard for the OSS has indicated that sexual identity is the concept that would be most relevant and suitable for general purposes.

This drew on work done by the ONS, which used the following definition and rationale:

What is sexual identity?

Self-perceived sexual identity is a subjective view of oneself. Essentially, it is about what a person is,

not what they do. It is about the inner sense of self, and perhaps sharing a collective social identity

with a group of other people. The question on sexual identity is asked as an opinion question, it is up

to respondents to decide how they define themselves in relation to the four response categories

available. It is important to recognise that the question is not specifically about sexual behaviour or

attraction, although these aspects might relate to the formation of identity. A person can have a

sexual identity while not being sexually active. Furthermore, reported sexual identity may change

over time or in different contexts (for example, at home versus in the workplace).

Why measure sexual identity rather than sexual orientation?

No single question would capture the full complexity of sexual orientation. A suite of questions

would be necessary to collect data on the different dimensions of sexual orientation, including

attraction, behaviour and identity, and to examine consistency between them at the individual level.

Although legislation refers to sexual orientation, research during question development deemed

sexual identity the most relevant dimension of sexual orientation to investigate given its relation to

experiences of disadvantage and discrimination. Testing showed that respondents were not in

favour of asking about sexual behaviour in a social survey context, nor would it be appropriate in

general purpose government surveys.

2.2 Where this data comes from

This information would be collected on the individual form in a new question. Its placement is likely

to be later in the form (separate from the sex question and next to any potential gender identity

question) and in the sections for those aged 15 or older.

Potential question formats, informed by those used by other collections, are discussed below:

The same work (Pega, F., Gray, A., & Veale, J. (2010) p.56) recommended a question of the following format for use in the OSS (in personal interviews):

ASK ALL AGED 16 OR OVER

Which of the options on this card best describes how you think of yourself? Please just read out the letter next to the description.

(letter) Heterosexual or Straight

(letter) Gay or Lesbian

(letter) Bisexual

(letter) Takatāpui

(letter) Fa’afafine

(letter) Other

(Spontaneous DK/Refusal)

Sexual identity has also been used as a concept and collected in the New Zealand Health Survey from 2014/15 (in interviews), in its sexual and reproductive health module (pg 70). The following question was asked:

Which of the following options best describes how you think of yourself?

1. Heterosexual or straight

2. Gay or lesbian

3. Bisexual

4. Other

.K Don’t know

.R Choose not to answer

Research done in the USA for the purpose of collecting sexual orientation and gender identity data for electronic health records and in healthcare settings has also recommended a similar format. The NZ AIDS Foundation indicated in its submission that this format would produce the necessary data.

Sexual orientation

Do you think of yourself as:

_ Lesbian, gay, or homosexual

_ Straight or heterosexual

_ Bisexual

_ Something else, please describe: ___________

_ Don’t know

The content team has recommended that initial testing of collection of sexual identity data follow the format of the question recommended by the ONS and used in the New Zealand Health Survey:

Which of the following options best describes how you think of yourself?

1. Heterosexual or straight

2. Gay or lesbian

3. Bisexual

4. Other

.K Don’t know

.R Choose not to answer

This has been chosen instead of the recommendation from the previous research by Pega, Gray and Veale in New Zealand, which included tick boxes for Takatāpui and Fa’afafine, for a number of reasons:

- The terms Takatāpui and Fa’afafine indicate a spectrum of identity which is broader than sexual identity and crosses into other aspects of identity, including gender identity.

- The question proposed by the previous New Zealand research was intended for personal interviews. If a question on sexual identity is included in the census, a question format that uses less space is ideal given the constraints of the paper questionnaire form.

- The New Zealand Health Survey team reported that cognitive testing for their sexual and reproductive health module showed that many people were not familiar with the terms Takatāpui and Fa’afafine or interpreted them in different ways.

- The question format being tested will still allow individuals who wish to self-identify this way to do so in the sexual identity question through the ‘other’ write-in box.

3 – Design differences between paper and internet forms

n/a

4 – Findings from testing (or review) and rationale for revision

These tables summarise in chronological order the versions of this question set that were tested (or

reviewed), along with brief findings, and rationale for revision.

Reasons for variables being omitted from a sprint may include: the content need or question design

is not ready, or the variable is not a focus for that sprint (eg it is not suited to the target

respondents), or the sprint is not a test of content.

Summary of sprints this variable has been tested in, plus testing type and mode type:

Sprint 4; cognitive testing and mass completions of paper forms

Sprint 5; cognitive testing and mass completions of paper forms

Census programme test, July 2016

Sprint 7; cognitive testing and mass completions of paper forms

Sprint 8; cognitive testing and mass completions of paper forms

Sprint 9A; cognitive testing and mass completions of paper forms

SPRINT 4; COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS

March 2016

Christchurch and Wellington

Aim:

The primary objective of the testing is to provide recommendations to inform a Go/No Go

decision on future development and testing of proposed 2018 Census content.

Test question with targeted sections of the general public to assess understanding/potential

for confusion/potential for drop off/potential for offence.

Respondents

The cognitive test participants included members of the public, students, and people with step family. The mass completion test participants included rural fire fighters, secondary students, and tertiary students (including young parents’ college and ESOL students).

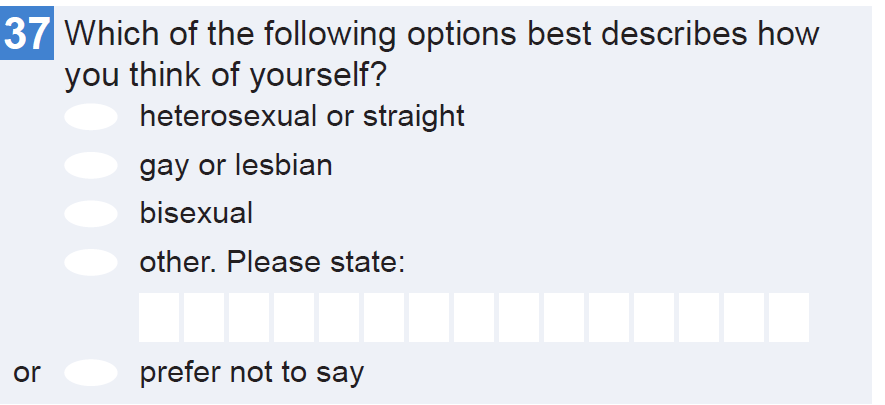

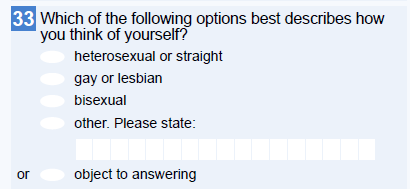

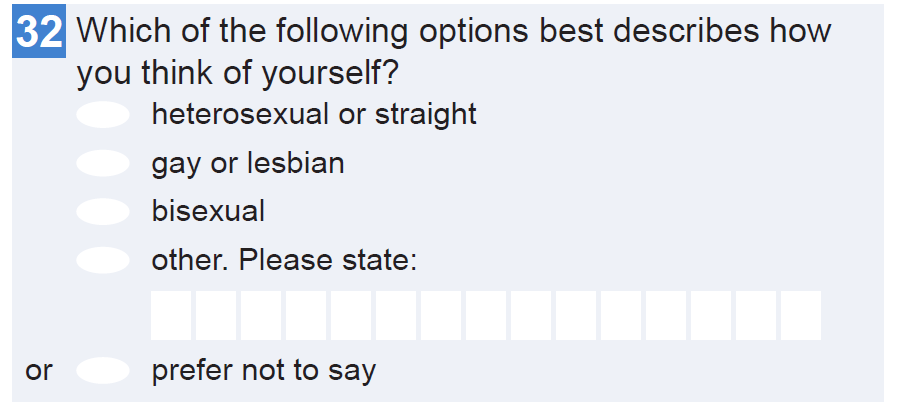

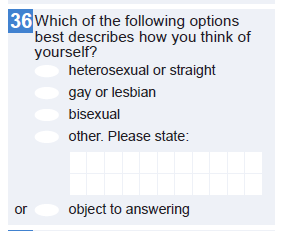

The question design tested in sprint 4 was:

SPRINT 4, COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS – FINDINGS

Respondent with English as a second language didn’t understand “heterosexual”

Male respondent happy with term “heterosexual” but thought term “straight” was a bit prejudiced

Weird that you could select “prefer not to say” for this but not for gender question

Some discomfort (surprise at inclusion), and giggling at the question

“Oh wow!, really?”

Asexual option suggested, considered to be as prevalent as bisexual

SPRINT 5, COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS

March 2016

Wellington and Christchurch

Aim:

General Public

The primary objective of the testing is to provide recommendations to inform a Go/No Go decision on future development and testing of proposed 2018 Census content.

Test question with targeted sections of the general public to assess understanding/potential for confusion/potential for drop off/potential for offence.

Targeted LGBTQI testing

Test with gay/lesbian/bisexual respondents to assess functioning of question in the New Zealand context, and as a paper self-complete.

Respondents

As with the previous sprint, cognitive test participants included members of the public, students, and people with step family. The mass completion test participants included secondary and tertiary students, Age Concern, retirement village residents, and a private workplace.

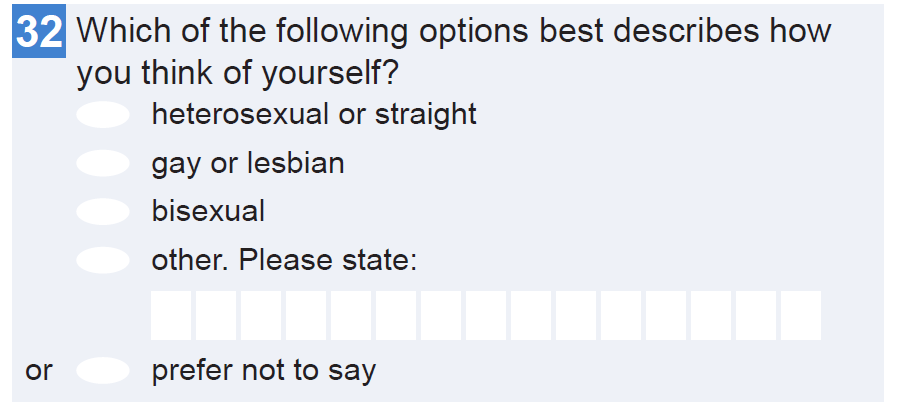

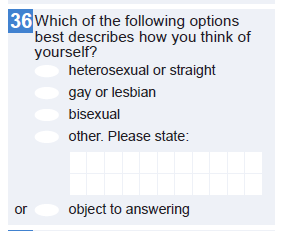

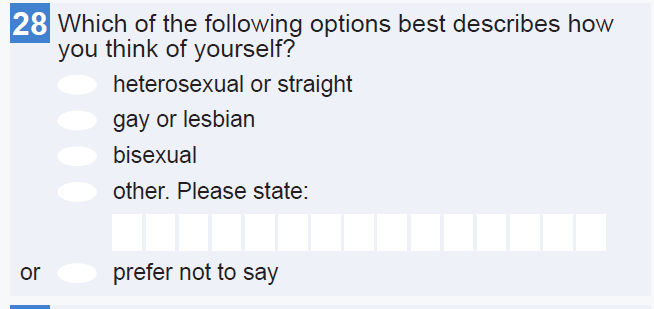

The question design tested in sprint 5 was:

SPRINT 5 – COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS – FINDINGS

General population cognitive testing:

Uncomfortable answering in interview but happy to do it at home.

Most people said they were happy to answer, but wondered whether this might be difficult for others.

Privacy envelope – didn’t like idea as drawing attention to themselves – implying something to hide.

Some respondents wondered why this information is needed: “what’s the point?”

Targeted LGBTQI testing:

Respondents felt this question was asking about sexual identity, attraction, orientation etc.

Several respondents made use of the ‘other, please specify’ option. A few respondents found this question very difficult to answer: one is figuring this out; an intersex respondent struggled with this.

Some queried whether they could select multiple options.

Respondents were mostly comfortable answering this in a census context. One respondent had concerns about ‘flow on effects’ of this being asked more widely, data sharing.

CENSUS PROGRAMME TEST

July 2016

Online and Paper form

Aim:

Primary

Test the proposed 2018 Census content on the public to see if question and response options are suitable for inclusion in a self-complete form.

Secondary

Learn if the inclusion of individual questions (new and changed content) impacts responses to other questions and completion rates (respondent burden is managed).

Ensure that question and response options provide fit-for-use information to an acceptable standard across all modes (paper, online – desktop and online – mobile), and have been tested appropriately with respondents so they find them easy to understand and complete.

Give confidence that form content and design for proposed question and response options can be processed, do not increase respondent burden, do not incur extra processing costs, and meet expected quality.

Respondents

The mass completion test participants were recruited via community groups (mainly The People’s Panel, Auckland) and organisations, including: secondary and tertiary students, Age Concern, retirement village residents, and a private workplace.

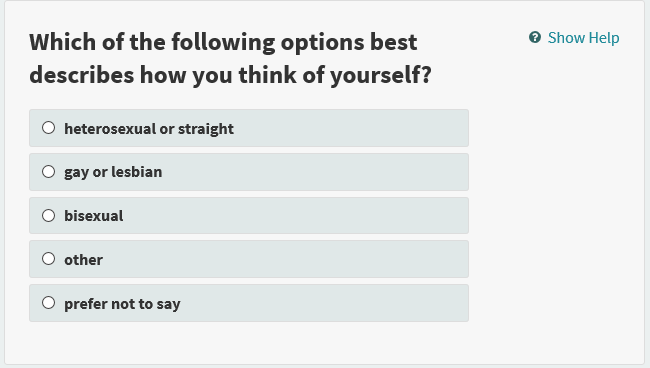

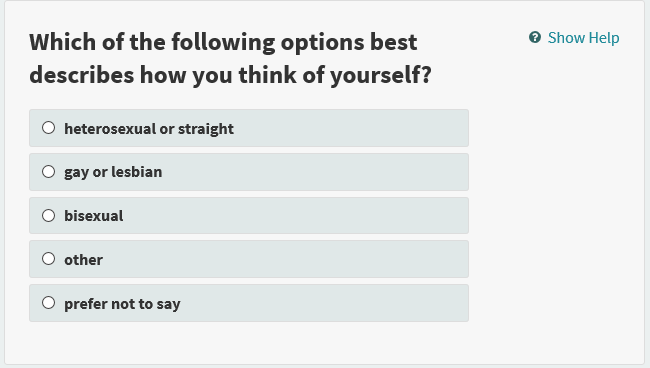

The question design tested in CPT was:

Online Form

SPRINT 7, COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS

June/July 2016

Version 1 - Christchurch, Wellington

Version 2 - Napier

Aim:

Observe respondent attitudes to the presence of this question.

Observe any difference between “object to answer” and the previously tested “prefer not to say”.

Respondents

The cognitive test participants included the general public and people with step family. The mass completion test participants included: boarding school students, tertiary students, young farmers, permanent residents at a holiday park, sports team, retirement village residents.

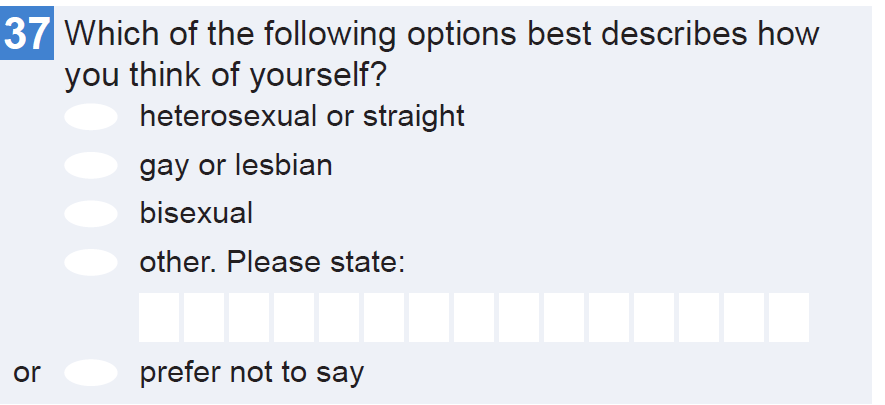

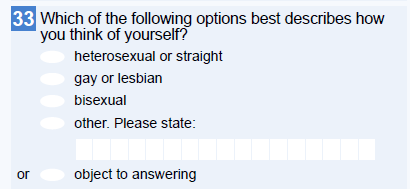

The question design tested in sprint 7 was:

SPRINT 7 – FINDINGS

Cognitive and group interview findings

There were no strong themes that emerged from the data for this question. Most

respondents answered the question with no difficulty and without comment. A few

respondents had some difficulty interpreting the response options and selecting their

response. One respondent sought clarification from the interviewer asking

“heterosexual/straight - that’s ‘normal’ isn’t it?” A couple of respondents noted the

‘object to answering’ category and commented that they would prefer a ‘prefer not to

say’ option, as they didn’t necessarily object to the question being asked in the form, but

they would personally prefer not to answer it.

Mass completion test findings

There were no significant issues from mass completion data.

SPRINT 7 – RECOMMENDATIONS

QMD recommends assessing data and respondent feedback from the Census Test (July

2016) for this question to further assess public sensitivity to the inclusion of this

question. The compliant respondent sample in this testing sprint combined with

interviewer presence may have masked any sensitivity toward this question which may

be expressed in other types of test environments.

QMD recommends that stakeholders consider the ‘prefer not to say’ versus the ‘object to answering’ response categories and the explicit and implicit meaning that these different response labels may convey to respondents. In addition, QMD recommends comparing Sprint 7 results, which tested ‘object to answering’, with previous testing and Census Test (July 2016) results to see if there are any observable effects introduced by these two variations.

SPRINT 8, COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS

July/August 2016

Wellington and Christchurch

Aims:

The Sexual Orientation variable had no specific testing objectives in this sprint.

Respondents

The cognitive test participants included renters, people with step family and parents with

children at home for proxy reporting. The mass completion test participants included

retirement village residents and members of the general public.

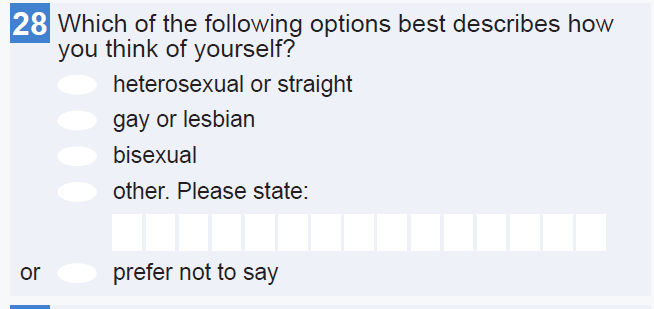

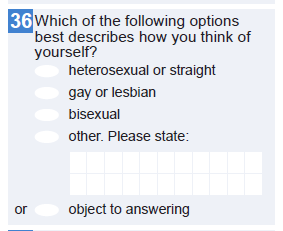

The question design tested in sprint 8 was:

SPRINT 9A, COGNITIVE TESTING AND MASS COMPLETIONS OF PAPER FORMS

July/August 2016

Wellington and Christchurch

Aims:

The Sexual Orientation variable had no specific testing objectives in this sprint.

Respondents

The cognitive test participants included renters, people with step family and parents with children at home for proxy reporting. The mass completion test participants included retirement village residents and members of the general public.

The question design tested in sprint 9A was:

5 – Data quality

<Expectations based on testing, known issues, question interactions (suggested edits). To be

completed towards the end of QMD testing>

Appendix 1: testing methodology

Research objectives

The broad research objectives of testing may vary with each sprint, but generally are to:

Understand how well individual questions and key concepts/definitions are understood by

respondents

Understand how well individual questions and the overall form design enables respondents

to answer quickly and accurately

Understand how new and changed questions may impact on other questions in the forms

Understand respondent burden

Understand public attitudes to new and changed questions which may influence their

willingness to answer

Topics or questions may be allocated as a primary or secondary focus or not a focus of testing in a

given sprint. This depends on the priority of the variable itself and how well it has tested previously.

Desktop review (paper and online)

Questions and questionnaires (paper and/or online) are reviewed before they are tested with

respondents. The aim of desktop review is to:

Check whether the forms accurately match content and design specifications;

Identify any usability issues in the online forms (across a range of devices, operating systems

and browsers);

Identify any potential issues that should be subjected to further testing with the public.

Test participants

Testing aims to include people from a wide range of backgrounds, with a mix of age, sex, ethnicity, income, employment status, etc. However, an individual sprint may target respondents with particular characteristics, for example, students, people who have children or stepfamily, Māori, or tenure (renting, home owners, etc).

Test participants have been recruited using a variety of methods. These have included flyers posted in public spaces such as libraries and YMCAs, Twitter and Facebook posts, and contacting community groups, eg LGBTQI+ groups, Step Family Network and the Retirement Village Association.

Testing methods

Three testing methods have been used, each with a different focus.

Cognitive testing

This is a qualitative, observational research method that helps identify problems with questionnaire

design. This methodology involves one-to-one interviews where respondents complete a

questionnaire. It uses techniques such as concurrent probing, retrospective probing and think-aloud

to highlight how respondents get to their answers and how they interpret certain terms.

Cognitive tests last around one hour, during which the first 30-40 minutes will involve the researcher

observing the respondent completing their dwelling form and individual form. The remaining 20-30

minutes will take a semi-structured interview approach. This time will be used to probe in-depth on

the focus questions described in this plan, which are relevant for respondents.

Mass completion + group interview

Mass completion tests involve asking a large group of respondents to complete a questionnaire

unobserved, in a supervised environment. Mass completion is a useful diagnostic tool to confirm

suspicions about a particular design or uncover unexpected reactions to questions using a larger

group of respondents.

Mass completion and group interview will last about one hour. In the first half of the session,

respondents will be asked to complete one or both Census forms. The remaining time will be used to

probe in-depth on the focus questions described in this plan, which are relevant for respondents.

The same semi-structured interview protocol can be used for cognitive testing and group interview.

Usability testing (online)

Usability testing involves one-to-one interviews where respondents complete a set of given tasks (eg complete household set up page, complete Individual/Dwelling form) on a device, ie a tablet, smartphone or desktop. It is a qualitative, observational research method used to identify problems with a user interface. Usability testing employs think-aloud, concurrent probing, and retrospective probing techniques to understand how the design of the user interface impacts on the user experience.

Analysis

From sprint 7 onwards, findings were coded to approximately 20 codes, which were further

summarised into themes:

Table: Analysis of testing findings – codes and themes used

Theme: Sensitivity

Codes: Total nonresponse due to sensitivity; Protest response; Selection of ‘object to answer’ response; Reluctant response; Sensitivity on behalf of others

Theme description: Relates to how and why respondents perceive question content to be sensitive to themselves and other people. Sensitivity is often based on the individual person’s personal experiences, worldview and personal values and can affect their willingness to respond.

Theme: Value / Value +

Codes: Questioning why we ask; Questioning use of data; Willingness to answer based on value judgement; Positive comment volunteered regarding info need

Theme description: Relates to the explicit or implicit value judgements that respondents make about a question and whether they perceive it as having value, or not. Whether respondents perceive a question to have value or not will affect both their willingness to answer and the quality of their response should they choose to answer.

Theme: Burden

Codes: Difficulty in recalling the requested information; Difficulty in interpreting the question; Difficulty in fitting their answer into the response formats/categories; Confusion or difficulties arising from interactions between questions; Effort required to answer

Theme description: Relates to the ease with which respondents are able to answer questions and the extent to which they have a positive respondent experience. There are many aspects of respondent burden which respondents may experience when answering questions. Some of these arise from ambiguous or unfamiliar terms or concepts in the questionnaire, while others may be a direct effect of the poorly designed question or form.

Theme: Error

Codes: Missed routing instructions; Instructions missed or incorrectly followed; Subjective response; Proxy response error; Guesses

Theme description: Relates to causes of respondent error that can affect data quality and reliability. Sources of error usually arise from poor question and form design, but may also include contextual factors specific to the respondent which can’t be controlled for.

Theme: Defective design

Codes: Poor question construction; Dissatisfaction with question/response options; Visual design of form

Theme description: Relates to respondent burden and error, specifically arising from poor question and form design. A fundamentally defective question or set of questions may negatively impact on data quality and/or the user experience.

Testing collects information about people’s willingness and ability to answer. Not all of these

findings will result in alterations to the questionnaire, and any changes that are made may not

necessarily resolve the issues found.